A content audit that knows exactly what rule it’s enforcing.

The Problem

Product changes, rebrands, and positioning updates happen fast. The content doesn’t always keep up. Feature names change; old copy stays live. New positioning rolls out; the help center still says something different. AI-generated copy makes it to the website without anyone catching the “leverage” or the Title Case headline.

Manual audits are expensive, slow, and incomplete. They catch the most obvious gaps and miss the systematic ones. And they don’t scale — a quarterly sweep might touch 40 pages when the actual surface area is 400.

As the sole UX writer on Muck Rack’s content team, I needed a way to audit at scale, with enough specificity that whoever fixes the copy knows exactly what changed and why.

The Fix

The Messaging Audit Skill is a product marketing tool that scans Muck Rack’s external-facing content and surfaces every gap between what the brand actually says and what it’s supposed to say. It checks against two knowledge bases:

Muck Rack Messaging Platform: Value pillars, product positioning, persona messaging, taglines, package descriptions. The authoritative version lives in Guru; the skill checks there first.

Brand Writing Guidelines: Voice, tone, anti-AI-ism rules, capitalization, sentence case, AP Style, accessibility standards, channel-specific tone. Everything the brand cares about at the word level.

It scans four content surfaces, ranging from the marketing site to internal knowledge cards and help center documentation.

Scope of work

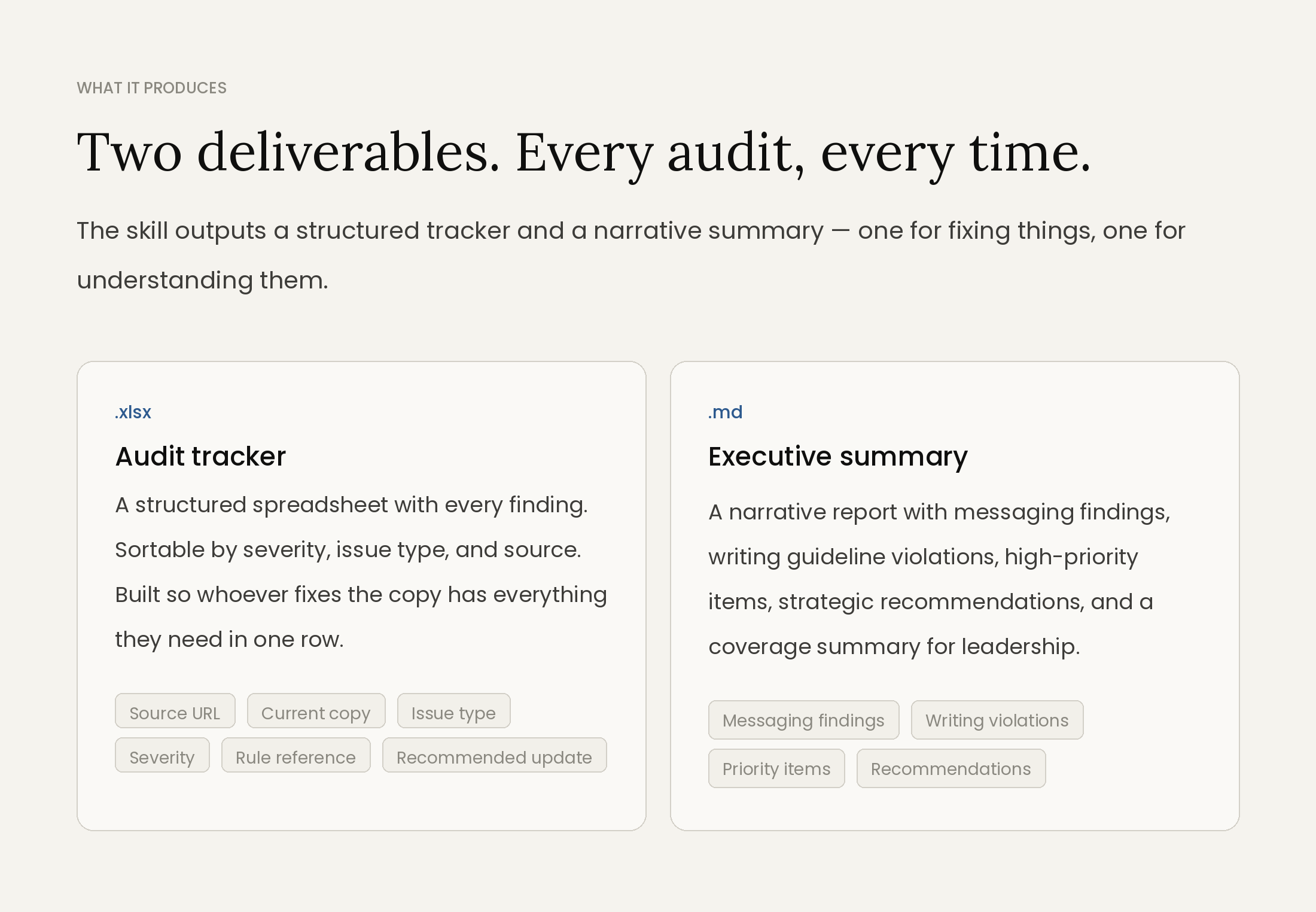

- Designed the audit logic structure: eight issue types, three severity levels, two source-of-truth references, and four content surfaces. Made sure each finding format was actionable, not just descriptive.

- Authored the skill’s evaluation criteria for every issue type, translating the messaging framework and writing guidelines into machine-readable detection rules.

- Built the output schema for the audit tracker: column architecture, field definitions, and sort/filter logic optimized for the person doing the fixes, not just the person running the audit.

- Configured Guru connector integration so the skill always checks the live version of both reference documents before falling back to bundled copies.
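
The live-first, bundled-second lookup can be sketched roughly as follows. This is a minimal illustration, not the skill’s actual connector code: `load_reference`, `fetch_live`, and the `bundled` mapping are hypothetical names, and `fetch_live` stands in for whatever call the Guru connector really exposes.

```python
from typing import Callable, Mapping, Optional, Tuple


def load_reference(
    name: str,
    fetch_live: Callable[[str], Optional[str]],  # hypothetical stand-in for the connector call
    bundled: Mapping[str, str],                  # hypothetical bundled copies, keyed by document name
) -> Tuple[str, str]:
    """Return (text, origin), preferring the live document over the bundled copy."""
    try:
        text = fetch_live(name)
    except Exception:
        # Any connector failure (network, auth, missing card) triggers the fallback.
        text = None
    if text:
        return text, "live"
    return bundled[name], "bundled"


def offline(name: str) -> Optional[str]:
    """Simulates an unreachable connector."""
    raise ConnectionError("connector unavailable")


bundled_copies = {"messaging-platform": "bundled messaging platform text"}

# Connector reachable: the live version wins.
live_result = load_reference("messaging-platform", lambda n: "live text", bundled_copies)
# Connector down: the bundled copy is used instead.
fallback_result = load_reference("messaging-platform", offline, bundled_copies)
```

Returning the origin alongside the text lets an audit report state which version of the reference it was checked against.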

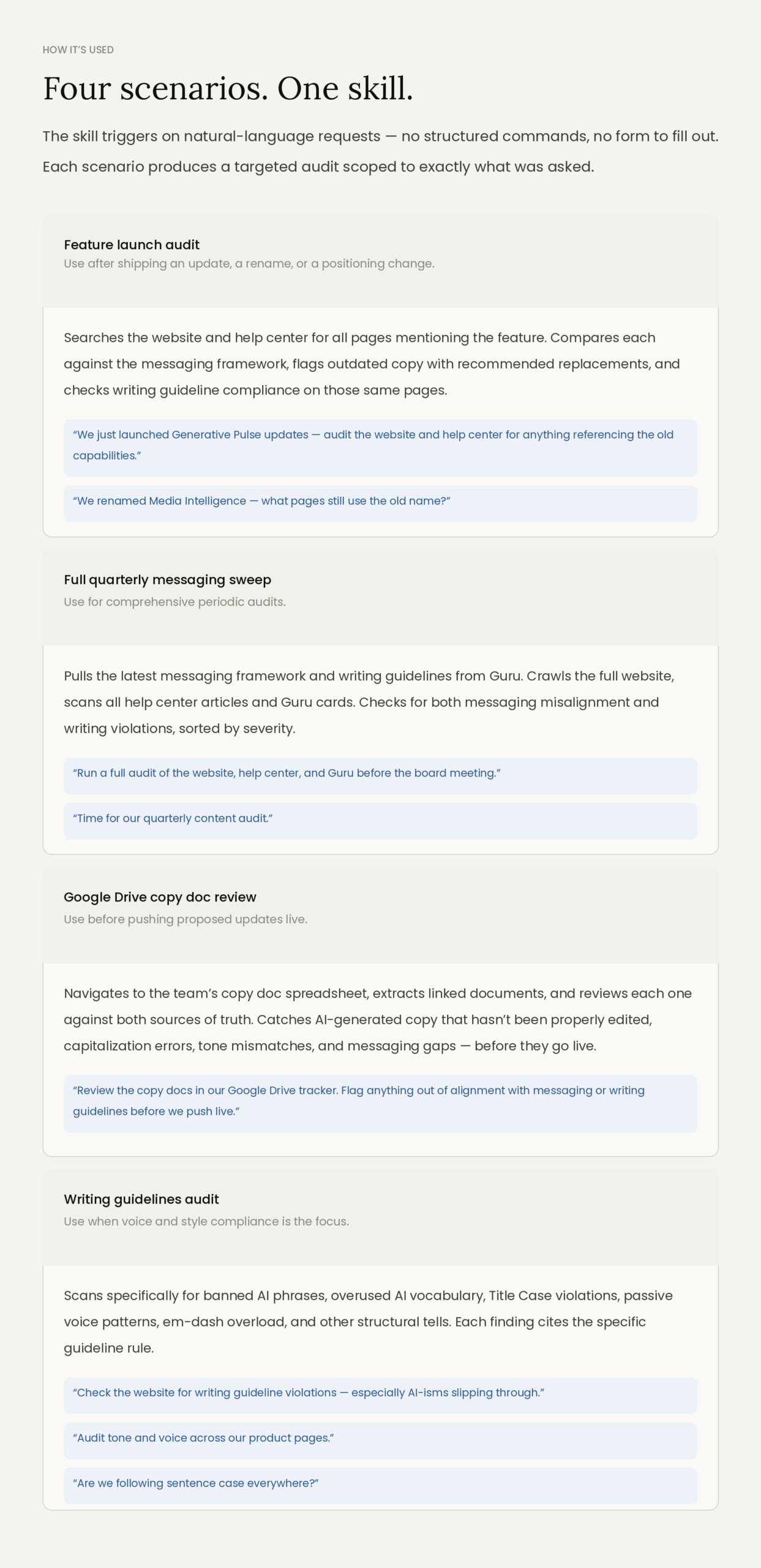

- Designed the four usage scenarios and their trigger language, ensuring the skill responds correctly to natural-language requests without requiring structured commands.

- Wrote the install guide and usage documentation for the team, including prerequisites, connector setup, and worked examples for each scenario.

- Tested the skill across all four content surfaces and iterated on detection accuracy, reducing false positives on stylistic edge cases.
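
To make the detection rules and tracker rows above more concrete, here is a small sketch of two word-level checks and the finding record they produce. Everything named here is an assumption for illustration: the `Finding` fields, the severity labels, the severity assigned to each rule, and the proper-noun allowlist are hypothetical and do not reproduce the skill’s real schema or criteria.

```python
import re
from dataclasses import dataclass
from enum import IntEnum
from typing import List


class Severity(IntEnum):
    # Hypothetical labels; the source only says there are three levels.
    HIGH = 1
    MEDIUM = 2
    LOW = 3


@dataclass(frozen=True)
class Finding:
    surface: str      # e.g. "marketing site"
    location: str     # page or document identifier
    issue_type: str
    severity: Severity
    rule: str         # the guideline section the finding cites
    excerpt: str      # the offending text


def check_banned_words(surface: str, location: str, text: str,
                       banned=("leverage",)) -> List[Finding]:
    """Flag exact, case-insensitive matches of banned words."""
    findings = []
    for word in banned:
        for m in re.finditer(rf"\b{re.escape(word)}\b", text, flags=re.IGNORECASE):
            findings.append(Finding(surface, location, "AI-ism", Severity.LOW,
                                    "Brand Writing Guidelines: anti-AI-ism rules",
                                    m.group(0)))
    return findings


def check_title_case(surface: str, location: str, headline: str,
                     proper_nouns=frozenset({"Muck", "Rack"})) -> List[Finding]:
    """Flag headlines whose longer words after the first are all capitalized."""
    candidates = [w for w in headline.split()[1:]
                  if w.isalpha() and len(w) > 3 and w not in proper_nouns]
    # Require at least two candidates so one capitalized name is not flagged.
    if len(candidates) >= 2 and all(w[0].isupper() for w in candidates):
        return [Finding(surface, location, "Capitalization", Severity.MEDIUM,
                        "Brand Writing Guidelines: sentence case", headline)]
    return []


def sort_for_fixer(findings: List[Finding]) -> List[Finding]:
    """Order rows by severity first, then by where the fix has to happen."""
    return sorted(findings, key=lambda f: (f.severity, f.surface, f.location))


rows = sort_for_fixer(
    check_banned_words("marketing site", "/pricing", "Leverage our data to leverage more.")
    + check_title_case("help center", "/lists", "How To Build Better Media Lists")
)
```

Because every row carries its `rule`, whoever fixes the copy can see which guideline applies without rerunning the audit. The allowlist is one simple way to cut false positives on brand names in otherwise sentence-case headlines.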

Outcomes

- Audits that cover the full surface area: Four content surfaces in a single run, versus the 40-page manual sample that used to represent a “full” audit.

- Findings tied to specific rules: Every flagged item cites the framework section or writing guideline it’s violating. No ambiguity about what the fix should be.

- Copy reviews that happen before go-live: The Google Drive scenario flags AI-generated copy and messaging gaps before publication, so they show up in the tracker instead of in production.

- Messaging discipline becomes a system: The skill enforces consistency regardless of who wrote the copy or how long ago it was published.