Building the internal marketing agency that never sleeps.

The Problem

Muck Rack’s messaging framework is sharp and well-defined — but it lives in documents. The gap between “we have a brand voice guide” and “every piece of content actually sounds like us” is where most B2B SaaS brands quietly fall apart.

As the sole UX writer on a six-person team, I sit at the intersection of content design, product, and brand. When marketing needs to move fast — spinning up a LinkedIn campaign, building out an email sequence, expanding a blog post into a full distribution kit — the institutional knowledge required to do it right doesn’t scale through a PDF.

The challenge wasn’t creativity. It was consistency. How do you make the brand knowledge portable, enforceable, and usable by anyone on the team?

The Fix

I built the Muck Rack Brand Engine — a custom Claude skill that encodes the brand’s messaging framework, persona playbook, channel specifications, and anti-AI-ism guardrails into a single, deployable system.

It works as a curated context: the kind of institutional knowledge that usually only exists in the heads of people who’ve been at the company for years.

The skill operates in five modes, each purpose-built for a specific marketing workflow. Under the hood, the skill draws from four interconnected reference files: a messaging framework, a persona playbook, channel specs, and an anti-AI-ism guide packed with banned phrases and the structural patterns they produce. Before every output, the skill runs a mandatory self-review: scanning for “here’s” throat-clearing, banned contrast constructions, Title Case violations, and the vocabulary of AI-generated mediocrity.
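The self-review itself runs as instructions inside the skill, but the same checks can be pictured as a small script. What follows is a minimal sketch, not the skill's actual implementation: the pattern list, the rule names, the `Finding` type, and the Title Case heuristic are all hypothetical stand-ins for the rules kept in the anti-AI-ism guide.

```python
import re
from dataclasses import dataclass

# Hypothetical rules. The real banned phrases live in the skill's
# anti-AI-ism guide and are not reproduced here.
BANNED_PATTERNS = {
    "heres_opener": re.compile(r"\bhere[’']s\b", re.IGNORECASE),
    "contrast_construction": re.compile(r"\b(?:isn[’']t|not) just\b", re.IGNORECASE),
    "flagged_vocabulary": re.compile(r"\b(?:delve|leverage|seamless)\b", re.IGNORECASE),
}


@dataclass
class Finding:
    rule: str          # which check fired
    line_number: int   # 1-based line in the draft
    excerpt: str       # the offending text


def is_title_case(heading: str) -> bool:
    """Crude heuristic: every longer word after the first is capitalized.

    Proper nouns will trigger false positives, so a real check would
    need an allowlist of brand and product names.
    """
    long_words = [w for w in heading.split()[1:] if len(w) > 3 and w[0].isalpha()]
    return len(long_words) >= 2 and all(w[0].isupper() for w in long_words)


def scan(draft: str) -> list[Finding]:
    """Return every rule violation found in the draft, in line order."""
    findings = []
    for number, line in enumerate(draft.splitlines(), start=1):
        for rule, pattern in BANNED_PATTERNS.items():
            match = pattern.search(line)
            if match:
                findings.append(Finding(rule, number, match.group(0)))
        # Assumes Markdown-style headings that start with "#".
        if line.startswith("#") and is_title_case(line.lstrip("# ")):
            findings.append(Finding("title_case", number, line))
    return findings


draft = (
    "# How PR Teams Measure Impact\n"
    "Here's the thing: it's not just a database.\n"
    "Track coverage in one place."
)
for finding in scan(draft):
    print(finding.rule, finding.line_number, finding.excerpt)
```

A draft that returns an empty list would pass this sketch of the scan. Anything else goes back for another revision before it reaches the user.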

Scope of work.

- Audited Muck Rack’s existing messaging framework, brand guidelines, and channel documentation to identify what was portable and what needed to be rebuilt for AI context.

- Authored four interconnected reference files — messaging framework, persona playbook, channel specs, and anti-AI-ism guide — structured for AI consumption, not human browsing.

- Designed the five-mode operating structure and wrote the behavioral rules, self-review process, and output format specifications for each mode.

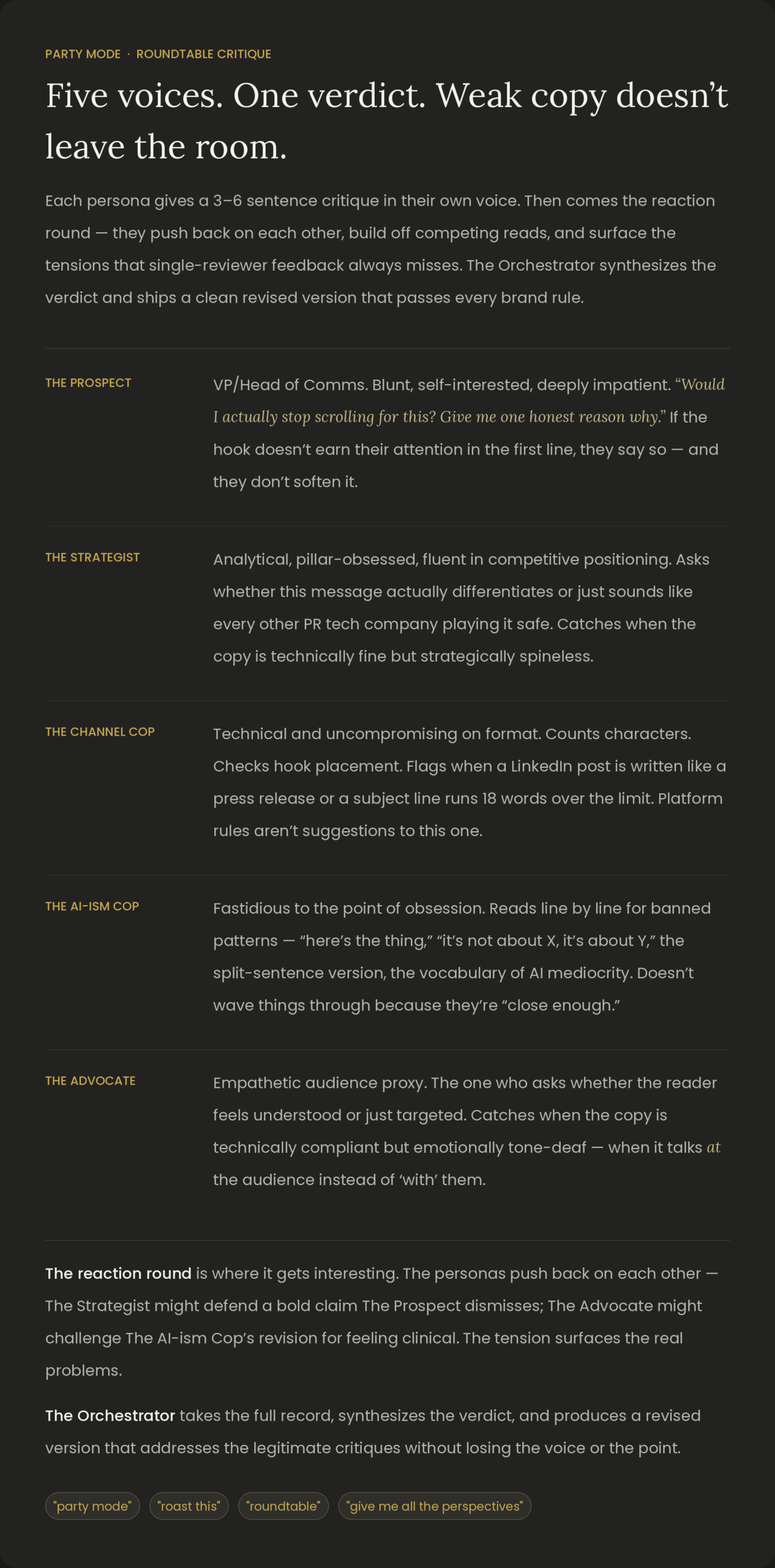

- Built and documented the roundtable multi-agent critique system, including persona voice profiles, reaction round mechanics, and Orchestrator synthesis logic.

- Wrote the skill’s self-compliance scan — the mandatory pre-delivery check that catches “here’s” phrases, banned contrast constructions, Title Case violations, and flagged vocabulary before content reaches the user.

- Tested outputs across all five modes and iterated on prompt structure to reduce the most common failure patterns (AI-isms, Title Case drift, pillar misalignment).

- Wrote the install guide for team onboarding, including trigger patterns, mode selection logic, and what the skill does not handle.

Party mode: a multi-agent critique roundtable that tears the work apart before it leaves the room.

Inspired by BMAD’s multi-agent workflow, I built a second layer into the skill: a roundtable of five AI personas who critique content from completely different angles simultaneously — then argue with each other before the final version ships.

Outcomes.

- Institutional knowledge, made portable: Brand expertise that lived in a few heads now runs in a deployable system any team member can access.

- Five modes, one consistent voice: From campaign briefs to asset expansion kits to video scripts — every output format anchored to the same messaging framework.

- Multi-angle critique before delivery: The roundtable model catches what a single reviewer tends to miss, such as strategic spinelessness, platform violations, and emotional flatness.

- Compliance baked in: Mandatory self-review runs before every output. The skill catches its own AI-isms, Title Case drift, and banned patterns before they reach a human editor.